Yahoo RYOT Lab · Smithsonian Institution · 3D Pipeline · AR / Game

Museum-grade assets.

Real-time performance.

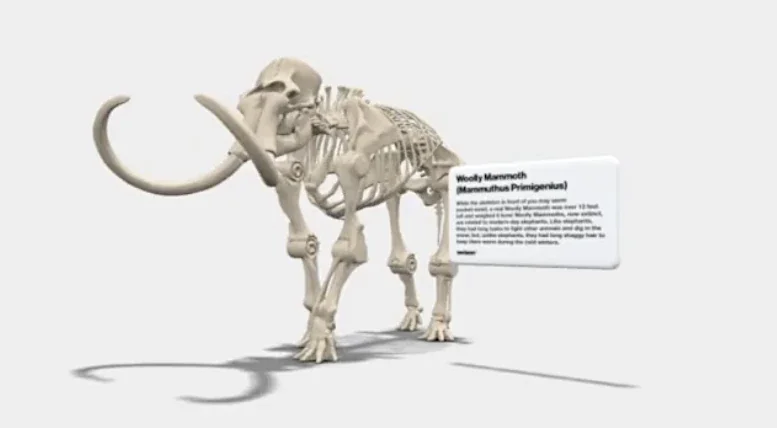

A conversion and optimisation pipeline built at Yahoo RYOT Lab in collaboration with the Smithsonian Institution — taking high-fidelity 3D museum assets and making them viable for augmented reality and game engine deployment. Starting weight: ~5.5MB per asset. Ending weight: something a phone could actually load without filing a complaint.

The challenge

The Smithsonian Institution holds one of the largest and most significant collections of 3D-scanned artefacts in the world. The scans are meticulous, historically important, and — by the standards of real-time rendering — absolutely enormous. A museum-quality 3D asset is built for accuracy, not for a mobile GPU running an AR session in someone's living room.

The task at Yahoo RYOT Lab was to bridge that gap: build a pipeline that could take Smithsonian assets at their native weight (~5.5MB) and produce versions optimised for augmented reality and game engine deployment — without losing the character, detail, and historical fidelity that made them worth using in the first place.

This is harder than it sounds. Polygon reduction is mechanical. Knowing which polygons to keep is not.

The pipeline

01 — Ingestion & analysis

Each asset enters the pipeline at its native resolution. The first stage analyses geometry complexity, UV coverage, texture density, and material structure — building a profile that informs the optimisation decisions downstream. No two assets are treated identically.
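As a rough illustration of what such a profile could contain, here is a minimal sketch. The `AssetProfile` fields, the `profile_asset` function, and the choice of metrics are assumptions made for this example, not the pipeline's actual data model.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class AssetProfile:
    """Hypothetical per-asset profile; field names are illustrative only."""
    triangle_count: int
    surface_area: float   # mesh units squared
    uv_coverage: float    # summed UV-space triangle area (overlaps count twice)
    texel_density: float  # texels per mesh unit, linear


def _tri_area_3d(a, b, c):
    # Half the length of the cross product of two edge vectors.
    ux, uy, uz = b[0] - a[0], b[1] - a[1], b[2] - a[2]
    vx, vy, vz = c[0] - a[0], c[1] - a[1], c[2] - a[2]
    cx, cy, cz = uy * vz - uz * vy, uz * vx - ux * vz, ux * vy - uy * vx
    return 0.5 * sqrt(cx * cx + cy * cy + cz * cz)


def _tri_area_2d(a, b, c):
    # Half the absolute 2D cross product.
    return 0.5 * abs((b[0] - a[0]) * (c[1] - a[1]) - (c[0] - a[0]) * (b[1] - a[1]))


def profile_asset(positions, uvs, triangles, texture_size):
    """Build a profile from indexed triangles and a square texture size in pixels."""
    area_3d = sum(_tri_area_3d(*(positions[i] for i in tri)) for tri in triangles)
    area_uv = sum(_tri_area_2d(*(uvs[i] for i in tri)) for tri in triangles)
    texels = area_uv * texture_size * texture_size
    density = sqrt(texels / area_3d) if area_3d > 0 else 0.0
    return AssetProfile(len(triangles), area_3d, area_uv, density)


# A unit quad whose UVs use half of the texture.
quad_positions = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
quad_uvs = [(0, 0), (0.5, 0), (0.5, 1), (0, 1)]
quad_triangles = [(0, 1, 2), (0, 2, 3)]
quad_profile = profile_asset(quad_positions, quad_uvs, quad_triangles, 1024)
```

A profile like this could then drive downstream choices, for example a lower texture resolution for assets whose texel density is far above what the target screen can show.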

02 — Geometry optimisation

Mesh decimation guided by surface curvature and visual importance — preserving detail where it matters (edges, distinctive features, surface character) and reducing aggressively where it doesn't (flat interior faces, occluded geometry, redundant topology). The target is perceptual fidelity, not polygon parity.
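One way to express "reduce flat areas first" is to rank candidate edge collapses by a curvature-weighted cost. The sketch below only computes that ranking; it is not a full decimator. The function name, the `curvature_weight` parameter, and the cost formula are assumptions for illustration, not the pipeline's actual heuristic.

```python
from collections import defaultdict
from math import acos, pi, sqrt


def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _length(v):
    return sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])


def _face_normal(positions, tri):
    a, b, c = (positions[i] for i in tri)
    u, v = _sub(b, a), _sub(c, a)
    n = (u[1] * v[2] - u[2] * v[1], u[2] * v[0] - u[0] * v[2], u[0] * v[1] - u[1] * v[0])
    length = _length(n)
    # Degenerate triangles get a zero normal instead of raising.
    return tuple(x / length for x in n) if length > 0 else (0.0, 0.0, 0.0)


def edge_collapse_costs(positions, triangles, curvature_weight=4.0):
    """Return {(u, v): cost}; low cost means a safe candidate for collapse."""
    normals = [_face_normal(positions, t) for t in triangles]
    edge_faces = defaultdict(list)
    for f, (a, b, c) in enumerate(triangles):
        for u, v in ((a, b), (b, c), (c, a)):
            edge_faces[(min(u, v), max(u, v))].append(f)

    costs = {}
    for (u, v), faces in edge_faces.items():
        length = _length(_sub(positions[u], positions[v]))
        if len(faces) == 2:
            n0, n1 = normals[faces[0]], normals[faces[1]]
            dot = max(-1.0, min(1.0, sum(x * y for x, y in zip(n0, n1))))
            angle = acos(dot)  # 0 on flat regions, larger across creases
        else:
            angle = pi  # assumption: protect boundary edges to keep the silhouette
        costs[(u, v)] = length * (1.0 + curvature_weight * angle)
    return costs


# A flat quad with one extra triangle folded up 90 degrees along edge (1, 2).
fold_positions = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0), (1, 0.5, 1)]
fold_triangles = [(0, 1, 2), (0, 2, 3), (2, 1, 4)]
fold_costs = edge_collapse_costs(fold_positions, fold_triangles)
cheapest_edge = min(fold_costs, key=fold_costs.get)
```

In this toy mesh the flat diagonal of the quad is the cheapest edge to collapse, while the creased edge and the boundary edges cost more and would be kept longer.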

03 — Texture rebaking

High-resolution textures rebaked to AR-appropriate resolutions with normal maps carrying the surface detail lost in decimation. The normal map does the work the geometry no longer can — preserving the impression of complexity at a fraction of the cost.
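To show how a normal map encodes surface detail, the sketch below converts a small height field into a tangent-space normal map using central differences. This is a simpler, related case chosen for illustration: it does not bake from a high-resolution mesh as the pipeline does, and the function name and `strength` parameter are assumptions.

```python
from math import sqrt


def height_to_normal_map(height, strength=1.0):
    """Convert a 2D list of heights into rows of 8-bit (r, g, b) normals."""
    rows, cols = len(height), len(height[0])
    out = []
    for y in range(rows):
        row = []
        for x in range(cols):
            # Central differences, clamped at the borders.
            left = height[y][max(x - 1, 0)]
            right = height[y][min(x + 1, cols - 1)]
            up = height[max(y - 1, 0)][x]
            down = height[min(y + 1, rows - 1)][x]
            dx = (right - left) * 0.5 * strength
            dy = (down - up) * 0.5 * strength
            # The normal tilts away from the uphill direction.
            # Note: green-channel direction differs between engines;
            # this sketch does not pick a specific engine convention.
            nx, ny, nz = -dx, -dy, 1.0
            inv = 1.0 / sqrt(nx * nx + ny * ny + nz * nz)
            # Map each component from [-1, 1] to [0, 255].
            row.append(tuple(int((c * inv * 0.5 + 0.5) * 255 + 0.5) for c in (nx, ny, nz)))
        out.append(row)
    return out


flat_map = height_to_normal_map([[0.0] * 3 for _ in range(3)])
ramp_map = height_to_normal_map([[0.0, 1.0, 2.0] for _ in range(3)])
```

A flat surface encodes to the familiar uniform light blue (128, 128, 255); any slope shifts the red or green channel, which is how a low-polygon surface can still shade as if the detail were present.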

04 — Floor shadow baking

A dedicated tool within the pipeline for baking plausible floor shadows — the contact shadow and ambient occlusion that ground an AR object in its environment. Without it, objects float. With it, they sit. It is a small detail that makes an enormous perceptual difference.
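The source does not describe how the tool works internally. As a hedged sketch of the general idea, the code below projects near-floor points onto a grid, makes points closer to the floor darker, and blurs the result into a soft contact shadow. The function names, the parameters (`size`, `extent`, `max_height`, `blur_passes`), and the falloff rule are all assumptions.

```python
def _box_blur(grid):
    """3x3 box blur; border cells average only the neighbours that exist."""
    size = len(grid)
    out = [[0.0] * size for _ in range(size)]
    for v in range(size):
        for u in range(size):
            total, count = 0.0, 0
            for dv in (-1, 0, 1):
                for du in (-1, 0, 1):
                    vv, uu = v + dv, u + du
                    if 0 <= vv < size and 0 <= uu < size:
                        total += grid[vv][uu]
                        count += 1
            out[v][u] = total / count
    return out


def bake_floor_shadow(points, size=16, extent=1.0, max_height=0.5, blur_passes=2):
    """Return a size x size grid of shadow opacity in [0, 1].

    points are (x, y, z) with y up and the floor at y = 0. The grid covers
    x and z in [-extent, extent). Points above max_height cast no shadow.
    """
    grid = [[0.0] * size for _ in range(size)]
    for x, y, z in points:
        if y < 0 or y > max_height:
            continue
        u = int((x + extent) / (2 * extent) * size)
        v = int((z + extent) / (2 * extent) * size)
        if 0 <= u < size and 0 <= v < size:
            # Closer to the floor means a darker contact shadow.
            grid[v][u] = max(grid[v][u], 1.0 - y / max_height)
    for _ in range(blur_passes):
        grid = _box_blur(grid)
    return grid


# One point on the floor, one just above it, one too high to matter.
sample_points = [(0.0, 0.0, 0.0), (0.1, 0.05, 0.0), (0.0, 2.0, 0.0)]
shadow = bake_floor_shadow(sample_points)
```

The resulting grid could be written out as an alpha texture on a quad placed under the object. A production tool would more likely sample the mesh surface or ray-cast for ambient occlusion rather than splat vertices.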

05 — Format export

Final output in formats appropriate for AR deployment and game engine ingestion, with LOD (level-of-detail) variants where necessary, each validated against target platform constraints before leaving the pipeline.
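A validation gate of this kind can be sketched as a per-variant check against a set of limits. The `PlatformLimits` and `LodVariant` types, the halving ratio, and every number below are hypothetical; the source does not state the real budgets or formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PlatformLimits:
    """Hypothetical per-platform budget."""
    max_triangles: int
    max_texture_size: int
    max_file_bytes: int


@dataclass(frozen=True)
class LodVariant:
    level: int
    triangles: int
    texture_size: int
    file_bytes: int


def plan_lod_budgets(base_triangles, levels=3, ratio=0.5):
    """Triangle budget per LOD level, shrinking by `ratio` each level."""
    return [max(1, int(base_triangles * ratio ** i)) for i in range(levels)]


def validate_variant(variant, limits):
    """Return a list of human-readable problems; empty means the variant passes."""
    problems = []
    if variant.triangles > limits.max_triangles:
        problems.append(f"LOD{variant.level}: {variant.triangles} triangles exceeds {limits.max_triangles}")
    if variant.texture_size > limits.max_texture_size:
        problems.append(f"LOD{variant.level}: texture {variant.texture_size}px exceeds {limits.max_texture_size}px")
    if variant.file_bytes > limits.max_file_bytes:
        problems.append(f"LOD{variant.level}: {variant.file_bytes} bytes exceeds {limits.max_file_bytes}")
    return problems


# Illustrative numbers only.
example_limits = PlatformLimits(max_triangles=15000, max_texture_size=2048, max_file_bytes=1_000_000)
passing = LodVariant(level=1, triangles=10000, texture_size=1024, file_bytes=400_000)
failing = LodVariant(level=0, triangles=20000, texture_size=4096, file_bytes=400_000)
```

Returning a list of problems rather than raising on the first failure lets a batch run report every issue for an asset in one pass.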

Results

~5.5MB → AR-ready

Native museum-scan assets reduced to real-time-viable sizes without perceptible loss of visual character.

Reproducible pipeline

Fully procedural: any new Smithsonian asset can run through the same pipeline with no per-asset manual intervention.

Floor shadow tool

Custom baking tool producing contact shadows that convincingly ground assets in AR environments across a range of surface types.